The Historical Evolution of Machine Intelligence

The figure below outlines how machine intelligence has evolved across different eras.

Reference: image from the introduction to the book 《深度学习》 (Deep Learning), ISBN: 9787115461476.

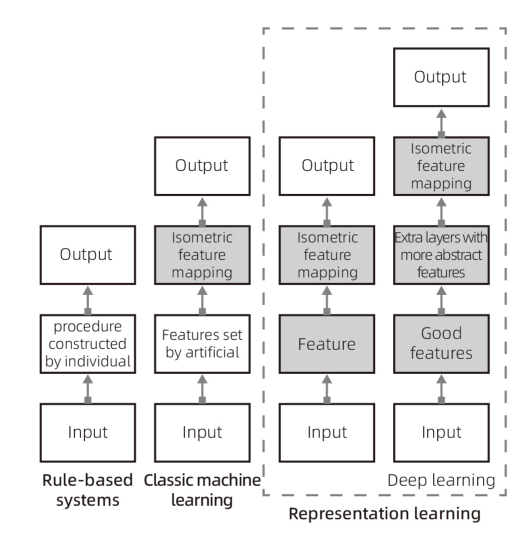

The earliest approach to machine decision-making was the rule-based system. The outputs of such a system are determined entirely by the developer's inputs and by the data processing formulas (algorithms) or procedures the developer designs. Rule-based systems remain in frequent use in modern software development and can be considered the foundational framework of traditional computer software. However, this framework struggles with large datasets that mix many data types, because developers cannot supply a complete, unified algorithm or formula that maps such data to the desired outputs at development time. Rule-based systems are therefore typically suited only to programs with simple input data and straightforward input-output relationships.
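As a minimal sketch of what "rule-based" means here, the function below encodes a decision entirely as developer-written rules. The scenario (a shipping fee), the function name `shipping_fee`, and all thresholds are hypothetical and chosen only for illustration.

```python
def shipping_fee(weight_kg: float, express: bool) -> float:
    """Hypothetical rule-based decision: every branch is written by the developer."""
    if weight_kg <= 0:
        raise ValueError("weight must be positive")
    # Rule 1: flat fee up to 1 kg, then a fixed surcharge per additional kg.
    fee = 5.0 if weight_kg <= 1.0 else 5.0 + 2.0 * (weight_kg - 1.0)
    # Rule 2: express delivery multiplies the fee by a fixed factor.
    if express:
        fee *= 1.5
    return fee


print(shipping_fee(3.0, express=True))
```

Nothing in this function is learned from data. The mapping from input to output is fully specified in advance, which works well for simple inputs but does not scale to cases where no one can write the rules down.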

By 1990, a simple machine learning algorithm, logistic regression, had been used to decide whether to recommend a cesarean section for a pregnant woman based on a series of input parameters describing her condition (Mor-Yosef et al., 1990). Various machine learning approaches emerged during the 1980s and 1990s, and all of them can be classified as classical machine learning, as shown in the diagram above. Similar algorithms can distinguish spam from legitimate email, or predict a reasonable house price range for an area from regional housing data. Compared to rule-based systems, these approaches could handle decision-making tasks with diverse data types, large data volumes, and non-intuitive input-output relationships.

However, classical machine learning relied on formalized, abstracted datasets: features of real-world scenarios quantified as numbers or binary (true/false, 1/0) values. Returning to the cesarean example, such a system could analyze quantified feature data to reach a diagnostic conclusion, but it could not decide whether a cesarean section was necessary directly from a CT scan or ultrasound image. Human doctors, in contrast, rely on interviews, physical examinations, and the reading of CT or ultrasound images. These sources of information are non-formalized, unlike the structured, quantifiable entries of a data table. This difference in the kind of information used is the fundamental distinction between simple machine learning decisions and human decision-making.
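To make the contrast with rule-based systems concrete, here is a minimal sketch of logistic regression trained by plain gradient descent on quantified features, using the spam example. The toy data, the two features, and the helper names `train_logistic` and `predict` are assumptions made for illustration; they are not taken from Mor-Yosef et al. or from any particular library.

```python
import math


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def train_logistic(X, y, lr=0.5, epochs=2000):
    """Fit weights and bias by batch gradient descent on the log loss."""
    n, n_features = len(X), len(X[0])
    w, b = [0.0] * n_features, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * n_features, 0.0
        for xi, yi in zip(X, y):
            p = sigmoid(sum(wj * xj for wj, xj in zip(w, xi)) + b)
            err = p - yi  # gradient of the log loss with respect to z
            for j in range(n_features):
                grad_w[j] += err * xi[j]
            grad_b += err
        w = [wj - lr * gj / n for wj, gj in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b


def predict(w, b, x) -> int:
    return 1 if sigmoid(sum(wj * xj for wj, xj in zip(w, x)) + b) >= 0.5 else 0


# Hypothetical features: [contains_link (0/1), exclamation marks / 10]
X = [[1, 0.9], [1, 0.7], [1, 0.8], [0, 0.1], [0, 0.2], [0, 0.0]]
y = [1, 1, 1, 0, 0, 0]  # 1 = spam, 0 = legitimate

w, b = train_logistic(X, y)
print([predict(w, b, x) for x in X])
```

The developer no longer writes the decision rule; the weights are fitted from examples. The developer does still choose and quantify the features by hand, which is the limitation discussed above.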

In the 2000s, deep learning began to emerge, demonstrating greater versatility and application value than classical machine learning. For classical machine learning, non-formalized scenarios such as image or speech recognition had remained insurmountable challenges. Deep learning systems gradually overcame these barriers in areas such as image recognition and text recognition. For tasks like image recognition, deep learning no longer requires features to be defined and configured by hand, or a dataset of hand-quantified features to be constructed, as classical machine learning did. Over time, the structure of deep learning models came to resemble the form of the human brain. This was not because early deep learning strategies were modeled on the brain's mechanisms, but because the structural similarity let deep learning perform better in non-formalized decision-making scenarios and exhibit characteristics akin to human decision-making (e.g., processing language, images, and other non-formalized information). Today, deep learning models have not only achieved breakthroughs in image and text recognition but have also excelled in higher-level applications such as autonomous driving, complex video game competitions, text generation, and speech translation.

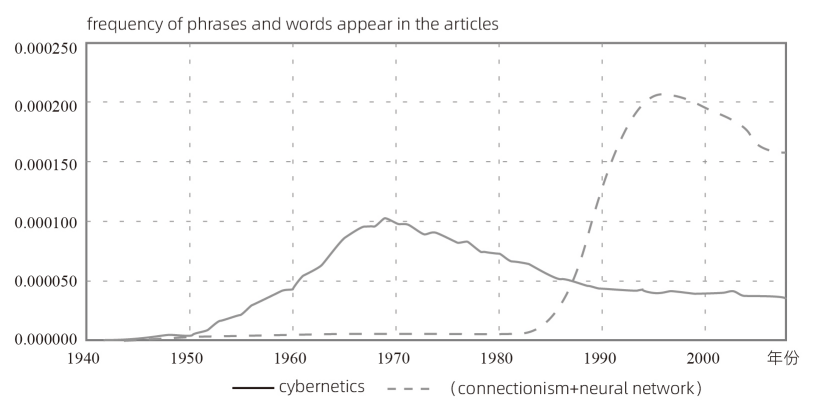

Based on the frequency of phrases like “cybernetics,” “connectionism,” or “neural networks” in Google Books, the history of artificial neural network research can be divided into three waves (the diagram shows the first two waves, with the third wave emerging more recently). The first wave, from the 1940s to the 1960s, was driven by cybernetics, fueled by the development of biological learning theories (McCulloch and Pitts, 1943; Hebb, 1949) and the implementation of the first models, such as the perceptron (Rosenblatt, 1958), which enabled the training of a single neuron. The second wave, from 1980 to 1995, involved connectionist approaches, which could train neural networks with one or two hidden layers using backpropagation (Rumelhart et al., 1986a). The current third wave, deep learning, began around 2006 (Hinton et al., 2006a; Bengio et al., 2007a; Ranzato et al., 2007a) and appeared in book form by 2016. Notably, the first two waves similarly appeared in books much later than their corresponding scientific activities.

Reference for the figure and description: introduction to the book 《深度学习》 (Deep Learning), ISBN: 9787115461476.
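The perceptron mentioned in the first wave can be illustrated with a short sketch of the classic perceptron learning rule applied to a single neuron. The task (learning logical AND), the learning rate, and the names `train_perceptron` and `fire` are assumptions chosen for illustration, not a reconstruction of Rosenblatt's original setup.

```python
def fire(w, b, x) -> int:
    """A single neuron with a step activation."""
    return 1 if w[0] * x[0] + w[1] * x[1] + b > 0 else 0


def train_perceptron(samples, epochs=20, lr=1.0):
    """Perceptron rule: adjust weights only when the neuron's output is wrong."""
    w, b = [0.0, 0.0], 0.0
    for _ in range(epochs):
        for x, target in samples:
            delta = target - fire(w, b, x)  # -1, 0, or +1
            w[0] += lr * delta * x[0]
            w[1] += lr * delta * x[1]
            b += lr * delta
    return w, b


# Logical AND is linearly separable, so a single neuron can learn it.
AND = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
w, b = train_perceptron(AND)
print([fire(w, b, x) for x, _ in AND])
```

A single neuron of this kind can only separate classes with a straight line (or hyperplane), which is one reason later work moved to networks with hidden layers trained by backpropagation.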

From rule-based systems to classical machine learning to deep learning, the evolution of technical approaches has broadly followed a progression from cybernetics to connectionism. Under the cybernetic framework, the system's rules, algorithms, and processes are fully constructed in advance; the entire path from input to output is knowable, monitorable, and controllable, and outputs tend to be more linear in character. Connectionism, as described by Wikipedia, holds that mental and psychological phenomena can be described by networks of simple, often uniform units, where the units and their connections can be represented as neurons and synapses (https://zh.wikipedia.org/wiki/联结主义). As systems evolved toward connectionism, the intermediate processing between input and output became more complex and considerably more random, leaving most connectionist processes hard to know, control, or monitor.

I believe classical machine learning systems still retain some elements of cybernetics: their processing procedures, algorithm selection, and feature choices are still planned by people, although their path selection begins to show some randomness. In deep learning systems, the manual feature extraction step is eliminated, which further reduces the share of human-defined, subjective settings.

As deep learning developed, its application potential and versatility far surpassed those of rule-based systems grounded in cybernetic logic. Deep learning tends to keep improving as data volume increases, whereas traditional machine learning reaches a performance plateau beyond which additional data yields no further improvement. This does not mean that cybernetics is useless. It struggles in non-formalized, highly complex, non-linear scenarios, but it remains critical in areas such as industrial automation, software management, and enterprise management. Standardized assembly lines and software data flow management, for instance, are ideal applications for cybernetics. In scenarios like marketing, design, creative development, language communication, or on-site translation, however, a cybernetic framework would require engineers and managers to standardize the scenario (e.g., by setting quantifiable KPIs), extract feature data, and manage it within a standardized cybernetic system. These scenarios are inherently non-formalized and non-standardized, and forcing formalized methods onto them can introduce significant distortions and biases into subsequent management.

In summary, for abstract, formalized tasks, traditional computer programs can easily outperform the human brain, while humans have the advantage in non-formalized tasks. The ability to handle non-formalized problems is a key indicator of a system's level of intelligence: the better a computer can process non-formalized problems, the more intelligent it is. With the introduction of connectionism, more non-formalized, variable, and non-standardized scenarios gained potential solutions, further expanding humanity's toolkit for addressing real-world challenges.